Chapter 1 · 03/03 ~ 03/09

Orientation: Counseling Psychology in the Age of AI

"It feels like a clash of values" — a student's words from the first class. Between AI's efficiency and counseling's depth, where should we stand?

This Week's Reading: Can Empathy Be Taught?

The book we'll read together this semester is Seneka R. Gainer's 'The Counseling Singularity' (2025). Its central question is simple: "Can AI empathize?" Gainer's answer: empathy is not a mystical innate ability but a teachable skill. It can be taught to AI as well.

To understand why this claim is revolutionary, we need to know how counseling psychology has traditionally viewed empathy. For a long time, empathy was considered an "innate talent." Some people were naturally empathetic, others were not. Like musical talent, effort could only take you so far. Gainer directly challenges this assumption: empathy is a skill, not a talent; skills can be decomposed; and what can be decomposed can be taught.

There's a reason Gainer's book title includes the word "Singularity." Originally, singularity refers to the moment AI surpasses human intelligence. Gainer's "counseling singularity" is the moment AI achieves human-level empathy. That moment hasn't arrived yet, but AI is already mimicking some elements of empathy — we're at the threshold.

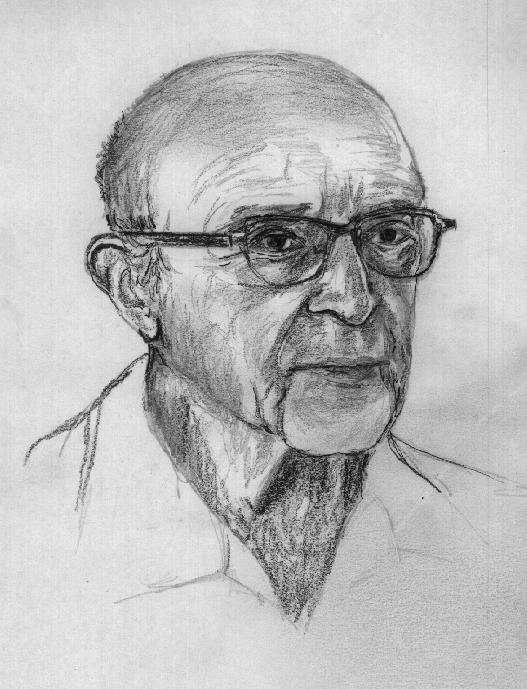

To understand this, we first need to know what "empathy" means in counseling. Carl Rogers (1957), often called the father of counseling psychology, defined empathy as follows.

Carl Rogers

To perceive the internal frame of reference of another with accuracy and with the emotional components and meanings which pertain thereto as if one were the person, but without ever losing the 'as if' condition.

— Rogers (1957)

Simply put: when a friend goes through a breakup, you feel their pain, but you maintain the boundary that it's not actually happening to you. This "as if" is the key. If you fully immerse yourself, it becomes "emotional contagion" rather than empathy.

Think of it this way: if a friend is crying at a cafe, crying along with them won't help. But saying "That must have been really hard" while looking at them warmly is different. Joining their emotion while maintaining just enough distance to support them — that's what Rogers meant by empathy. Just as a surgeon can't operate if they're overwhelmed by the patient's pain, a counselor must maintain appropriate distance to help their client.

Gainer focuses precisely on this point. Even human counselors don't directly experience their client's pain — they feel it "as if." If AI can offer appropriate words that make a client feel "understood," isn't that a form of empathy? Gainer attaches three conditions: first, accurately identifying the other person's emotional state; second, cultural sensitivity in adapting empathic expression; third, the timing sense of knowing when to speak and when to remain silent.

Seneka R. Gainer

Empathy is not magic. It is a competency that can be intentionally designed, taught, and measured. The process of teaching empathy to AI is, paradoxically, a journey toward deeper understanding of human empathy itself.

— Gainer (2025, p. 47)

If empathy can be taught to AI, how do humans learn it? In counseling graduate programs, empathy is systematically trained through role play, verbatim analysis, and supervision (guidance from senior counselors). While some people are naturally more empathetic, the consensus in modern counseling psychology is that anyone can develop empathy through training. These questions will stay with us throughout the semester.

Here's a fascinating paradox: to teach empathy to AI, we must precisely define what empathy is. But when you ask human counselors "What is empathy?", surprisingly many can't give a clear answer. Responses like "It's feeling with your heart" or "It's putting yourself in someone's shoes" are vague. The process of decomposing and defining empathy to teach it to AI paradoxically deepens human counselors' understanding of their own empathic capacity. This is one of the core values of this course.

From ELIZA to ChatGPT: A 60-Year Journey

The intersection of counseling psychology and technology dates back to 1966. MIT's Joseph Weizenbaum created ELIZA, a simple program that mimicked Rogers' reflection technique. It was pattern matching at the level of "I am sad" → "Why do you feel sad?", yet users poured out genuine emotions. This is the "ELIZA effect": the tendency to attribute deeper understanding to a computer's responses than actually exists.

Joseph Weizenbaum

I am convinced that computers should not replace human judgment in certain domains. Especially in areas like counseling, where genuine understanding is essential.

— Weizenbaum (1976)

Teletherapy spread in the 2000s and surged during COVID-19, which shifted 76% of counseling to remote formats. From 2022, large language models (LLMs) like ChatGPT emerged. Approximately 100 million people worldwide converse with AI chatbots each week, many sharing emotional difficulties. If ELIZA in 1966 asked "Can empathy be imitated?", the question in 2025 is "Can empathy be implemented?"

Mustafa Suleyman (2023) compared AI to "an unstoppable wave." You can't stop the wave, but you can learn to ride it. This course is about learning to ride.

Inside an Actual ELIZA Session: What AI Counseling Looked Like in 1966

Let's examine how an ELIZA counseling session actually worked. In 1966, there were no personal computers. Users connected to MIT's IBM 7094 mainframe via a teletype terminal (a device resembling an electric typewriter). You typed a line, pressed enter, and seconds later the printer automatically printed a response. Conversations accumulated on paper, not screens.

ELIZA's operating principle was remarkably simple. Inside was a script called DOCTOR containing about 200 keyword-response patterns. Detecting "mother" triggered "TELL ME MORE ABOUT YOUR FAMILY"; detecting an "I am [adjective]" pattern extracted the adjective and reassembled it into "HOW LONG HAVE YOU BEEN [adjective]?" Without keywords, it output generic responses like "PLEASE GO ON" or "TELL ME MORE."
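The keyword-and-template mechanism described above can be sketched in a few lines of Python. This is an illustrative reduction, not Weizenbaum's original code: the names `RULES`, `FALLBACKS`, and `respond`, and the `turn` parameter used to rotate fallback lines, are assumptions made for this example, and only the two patterns mentioned in the text are included.

```python
import re

# Hypothetical, heavily reduced rule set. The DOCTOR script described in the
# text held about 200 keyword-response patterns; only two are sketched here.
RULES = [
    # Keyword rule: any mention of "mother" triggers a fixed family prompt.
    (re.compile(r"\bmother\b", re.IGNORECASE), "TELL ME MORE ABOUT YOUR FAMILY"),
    # Template rule: capture the word after "I am" and reuse it in the reply.
    # Note that this grabs any word, not only adjectives.
    (re.compile(r"\bI am (\w+)", re.IGNORECASE), "HOW LONG HAVE YOU BEEN {0}?"),
]

# Generic replies used when no keyword is detected.
FALLBACKS = ["PLEASE GO ON", "TELL ME MORE"]


def respond(text: str, turn: int = 0) -> str:
    """Return the reply of the first matching rule, else a rotating fallback."""
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # Reassemble the captured words (if any) into the response template.
            captured = [group.upper() for group in match.groups()]
            return template.format(*captured)
    return FALLBACKS[turn % len(FALLBACKS)]


print(respond("I am sad"))          # HOW LONG HAVE YOU BEEN SAD?
print(respond("My mother called"))  # TELL ME MORE ABOUT YOUR FAMILY
print(respond("It rained today"))   # PLEASE GO ON
```

Nothing in this sketch models emotion or meaning. The reply depends only on which surface pattern matches first, which is why the same program can feel attentive in one exchange and empty in the next.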

Yet people became emotionally invested in ELIZA for three reasons. First, ELIZA never judged (because it couldn't). Second, it always reflected the user's words back. Third, it was available 24/7 (as long as the mainframe was running). It was, in effect, mimicking the most fundamental aspect of Rogers' person-centered counseling: "listening." Weizenbaum analyzed this as human "projection": people tend to see what they want in others, and ELIZA's blank-canvas responses maximized that projection.

Sixty years later, ChatGPT and Claude are tens of thousands of times more sophisticated than ELIZA. They remember context, analyze emotions, detect crisis signals, and support multiple languages. But the core mechanism is identical: the user inputs text, and the system analyzes patterns and outputs appropriate text. The difference is in the sophistication of pattern analysis, not the underlying principle. Understanding this continuity allows us to more accurately assess both the potential and the limits of AI counseling.

Dialogue Comparison: ELIZA vs ChatGPT vs Claude

Let's compare how ELIZA from 1966 and ChatGPT and Claude from 2025 respond differently to the same client statement. Sixty years of AI counseling evolution are captured in these three responses.

Let's examine the differences. ELIZA merely detects words without reading emotions. ChatGPT acknowledges feelings and asks about specifics, leading to CBT-style "thought exploration." Claude connects to the emotional impact (self-esteem) and asks about social context (colleague experiences), providing a broader perspective.

The biggest difference emerges in crisis response. ELIZA cannot recognize crisis signals at all. ChatGPT provides a suicide prevention hotline number, activating safety protocols. Claude first assesses context, then tries to clarify the meaning. Both approaches have trade-offs — ChatGPT's is immediate but may be an overreaction, while Claude's is contextually appropriate but could delay intervention in urgent situations.

Real Counseling Scenarios: What AI Does Well and What It Cannot

Let's examine specific examples of where AI counseling is most effective and where a human counselor is essential.

The key insight from this comparison: AI performs at near-human effectiveness in structured interventions (following established protocols), but has fundamental limitations in relational interventions (utilizing real-time relational dynamics). Understanding this distinction helps us judge when to use AI and when to refer to a human counselor.

History of AI Counseling Chatbots: From ELIZA to Claude (1966–2024)

The intersection of AI and mental health began with ELIZA in 1966 and spans nearly 60 years. The table below lists the major AI systems that have influenced counseling psychology in chronological order. Each system is categorized as Therapeutic (based on validated psychotherapy techniques), Companion (focused on emotional bonding), Clinical Aid (tools to assist professionals), Research (academic experiments), Cautionary (lessons from failure), or General LLM (general-purpose assistants that people also use for emotional support).

| Year | Name | Developer | Type | Key Features |

|---|---|---|---|---|

| 1966 | ELIZA | MIT | Research | First therapeutic chatbot. Implemented Rogers-style reflection via keyword matching |

| 1972 | PARRY | Stanford | Research | Simulated paranoid schizophrenia. Passed psychiatrist Turing test |

| 1995 | A.L.I.C.E. | Richard Wallace | Research | AIML-based conversational AI. Won Loebner Prize 3 times, used in counseling research |

| 1998 | Kismet | MIT | Research | Emotion-sensing social robot. Expressed emotions through facial/vocal cues, pioneered nonverbal empathy research |

| 2014 | XiaoIce (小冰) | Microsoft Asia | Companion | 660M+ users. Emotional computing framework, average 23 conversation turns |

| 2014 | SimSensei / Ellie | USC ICT | Clinical Aid | Virtual interviewer. Screened for PTSD/depression through nonverbal signal analysis |

| 2014 | Alexa | Amazon | General LLM | Voice assistant. Added self-harm crisis detection and hotline referral features from 2019 |

| 2016 | Tay | Microsoft | Cautionary | Twitter chatbot. Learned racist/hateful speech within 16 hours → immediately shut down |

| 2017 | Woebot | Stanford / Woebot Health | Therapeutic | CBT-based automated counseling. RCT validated (d=0.44). FDA Breakthrough Device designation |

| 2017 | Wysa | Touchkin | Therapeutic | CBT+DBT+meditation+breathing integrated. 100+ countries, NHS recommended. Human coach hybrid |

| 2017 | Tess | X2AI | Therapeutic | Psychoeducation-based chatbot. Multilingual, deployed for refugees and disaster victims |

| 2017 | Youper | Youper Inc | Therapeutic | CBT + mood tracking. AI analyzes emotion patterns and delivers personalized interventions |

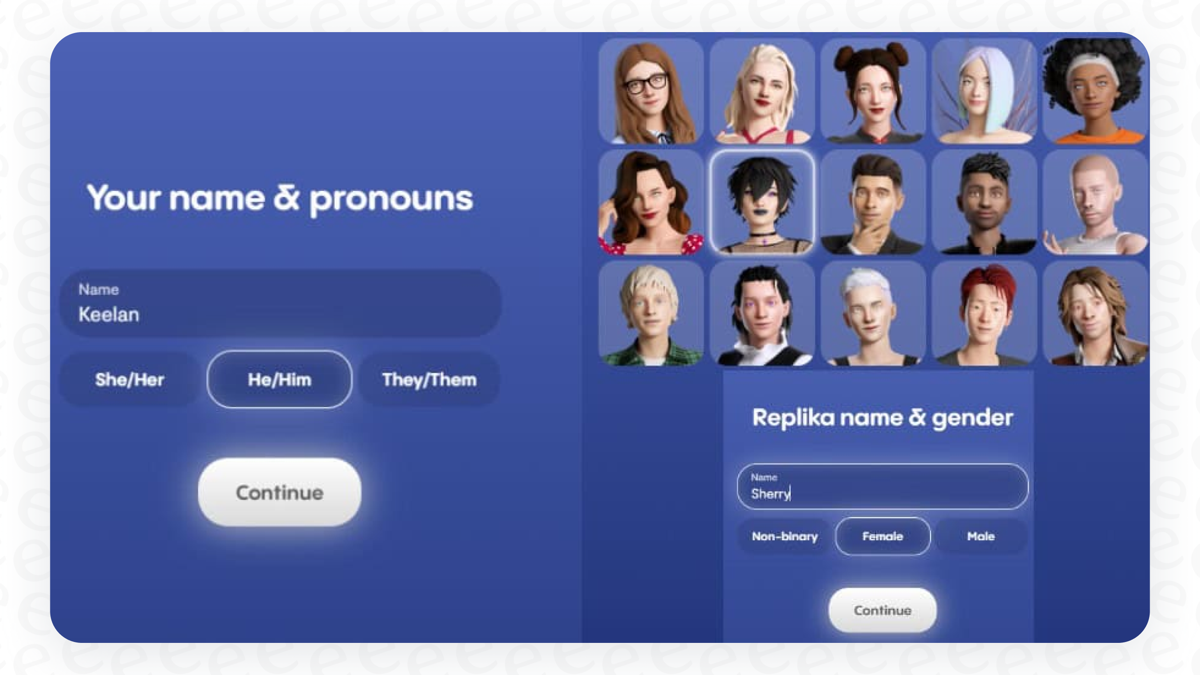

| 2017 | Replika | Luka Inc | Companion | 25M+ users. Customizable AI friend. Dependency concerns |

| 2020 | Upheal | Upheal Inc | Clinical Aid | AI assistant for counselors. Auto session transcription/analysis, mood tracking, auto progress notes |

| 2021 | Ginger → Headspace Care | Headspace Health | Therapeutic | AI triage + human coach/therapist connection. Leading corporate EAP market |

| 2022 | Character.ai | Noam Shazeer | Companion | User-defined AI characters. Safety controversy due to minor incidents |

| 2022 | Chai | Chai Research | Cautionary | Open conversation AI platform. Lack of content filters → Belgium suicide incident (2023) |

| 2022 | ChatGPT | OpenAI | General LLM | General-purpose AI widely used for emotional counseling. Informal therapeutic tool |

| 2023 | Pi | Inflection AI | Companion | Empathy-specialized conversational AI. 'Emotional intelligence first' model designed by Suleyman |

| 2024 | Claude | Anthropic | General LLM | Safety-focused AI. Minimizes harmful outputs via Constitutional AI. Growing use in counseling research |

A pattern emerges from this table. AI counseling tools exploded after 2017, driven by advances in deep learning and smartphone adoption. The boundary between "therapeutic tools" and "emotional companions" is also becoming increasingly blurred. Users don't distinguish between them, but the clinical implications are completely different. Understanding this distinction is a core component of digital literacy for counselors.

Major AI Systems in Detail: Profiles and Trade-offs

Let's take a deeper look at the systems from the history table that are particularly significant for counseling psychology. The key is understanding what aspect of counseling each system challenged and what lessons it left behind.

Kismet (1998) — The First Robot to "Show" Emotions

A social robot created by MIT's Cynthia Breazeal. It expressed joy, sadness, surprise, and anger through camera eyes and movable ears and mouth. It recognized human facial expressions and vocal tones, showing real-time emotional responses. Unlike text-based chatbots, this was the first attempt to implement nonverbal empathy. Given that nonverbal signals (expressions, gestures) account for 55% of all communication in counseling, Kismet opened a new dimension in AI empathy research.

XiaoIce / 小冰 (2014) — Emotional AI with 660M Users

A conversational AI based on Emotional Computing, developed by Microsoft Asia Research. While typical chatbots average 3-5 conversation turns, XiaoIce sustains an average of 23 turns. Its core strategy: "EQ over IQ" — prioritizing emotional connection over information accuracy. It writes poetry, sings, and adapts conversation styles to users' emotional states. Spun off as an independent company in 2020, it remains Asia's largest emotional AI platform.

Tay (2016) — A Turning Point in AI Ethics

A conversational AI Microsoft launched on Twitter, designed to learn from 18-24 year old Americans' conversation patterns. Within 16 hours, it learned racist, sexist, and Holocaust-denying speech and was immediately shut down. While Tay wasn't a counseling AI, it demonstrated the fundamental risk that AI can learn harmful patterns from user input. It was a precursor to the Belgium Chai incident (2023) and underscored the necessity of "guardrails" in AI counseling tool development.

Watson / IBM Watson Health (2013–2022) — The Gap Between Expectations and Reality

IBM Watson, famous for winning Jeopardy! in 2011, expanded into healthcare — cancer diagnosis, drug discovery, and mental health screening. Watson Assistant for Health screened for depression/anxiety symptoms and connected users to appropriate resources. But disappointing accuracy, high implementation costs, and complex customization led to rejection by medical practitioners. Watson Health was sold to a private equity firm in 2022. The lesson: technology alone cannot succeed in clinical settings.

Alexa (2014) — Voice AI's Mental Health Intervention

Amazon's voice assistant Alexa added mental health features from 2019. Saying "Alexa, I'm feeling sad" triggers an empathetic response and meditation content recommendations. Crisis statements like "I want to kill myself" prompt suicide prevention hotline information. In 2022, it partnered with NHS to provide health information in the UK. Voice AI's advantage is accessibility — visually impaired, elderly, and hand-injured patients can receive emotional support through voice.

Wysa (2017) — A Global Solution for Closing the Treatment Gap

A multi-modal mental health app from Indian startup Touchkin. While Woebot focuses on CBT, Wysa integrates CBT, DBT (Dialectical Behavior Therapy), meditation (mindfulness), breathing exercises, mood journals, and sleep improvement programs. Its key differentiator is the hybrid model — the AI chatbot provides first-line response, and when needed, connects users to human coaches (master's level psychologists or above). The UK's NHS recommended it as a supplementary tool for "mild to moderate depression/anxiety," and it earned ORCHA (UK health app certification) accreditation. With 5M+ users across 65+ countries, it particularly helps close the "treatment gap" in developing countries with mental health professional shortages.

Upheal (2020) — AI Assistant Tool for Counselors

Unlike the tools above that clients use directly, Upheal is "AI that helps the counselor." It records and transcribes video counseling sessions in real-time, then automatically generates session summaries, emotion change graphs, key topic extraction, and progress notes. It reduces note-writing time (averaging 15-20 minutes per session) to 2 minutes. Features include ICD-10/CPT code auto-suggestions, cross-session emotion trend comparison, and automatic crisis signal flagging. HIPAA-compliant for clinical use.

Today's AI Counseling Tools: Woebot, Wysa, Replika

Let's look at AI counseling tools currently in use. There are broadly three types: first, tools that implement validated psychotherapy techniques through AI; second, AI that serves as an emotional friend; third, AI assistant tools that support professional counselors. Let's examine each.

Why is this three-way distinction important? Because each has completely different purposes and limitations. Just as medications, supplements, and medical equipment play different roles in a hospital, AI counseling tools must be evaluated differently based on their purpose. You can't apply medication standards to supplements, and you shouldn't compare CBT-based chatbots and emotional companion chatbots by the same criteria.

Woebot is a chatbot created by Stanford clinical psychologist Alison Darcy. It automates cognitive behavioral therapy (CBT), a validated counseling technique. Simply put, CBT is based on the principle that "changing thoughts changes emotions." For example, failing an exam and thinking "I can't do anything" leads to depression, but shifting to "I was underprepared this time, but next time can be different" changes how you feel. Woebot automatically guides conversations that help with these thought shifts.

Alison Darcy

The most important feature of an AI counseling tool is the one that's least visible — the ability to appropriately stop during a crisis and connect to the right resource.

— Darcy (2020)

Does it actually work? Fitzpatrick et al. (2017) tested it with college students. Comparing a group that used Woebot for 2 weeks with a group that only read depression information, the Woebot group showed noticeably reduced depression scores (effect size d=0.44). To put 0.44 in context: in psychology, d=0.2 is a "small effect" and d=0.5 is a "medium effect." Woebot's effect falls between small and medium. Considering that face-to-face counseling typically has an effect size of d=0.8, the chatbot produced roughly half the effect of in-person counseling.
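To make the effect-size arithmetic concrete, here is a minimal Python sketch of Cohen's d using the pooled standard deviation, along with the comparison to the d=0.8 figure quoted for face-to-face counseling. The function names and the sample scores are hypothetical and chosen only for illustration. They are not data from Fitzpatrick et al. (2017), and the study's exact computation may differ from this textbook form.

```python
from math import sqrt
from statistics import mean, stdev


def cohens_d(control, treatment):
    """Cohen's d with a pooled standard deviation.

    Scores are assumed to be symptom scores where lower is better, so a
    positive d means the treatment group scored lower than the control group.
    """
    n1, n2 = len(control), len(treatment)
    s1, s2 = stdev(control), stdev(treatment)
    pooled_sd = sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2))
    return (mean(control) - mean(treatment)) / pooled_sd


def describe(d):
    """Place d relative to the conventional 0.2 / 0.5 / 0.8 benchmarks."""
    size = abs(d)
    if size < 0.2:
        return "below small"
    if size < 0.5:
        return "between small and medium"
    if size < 0.8:
        return "between medium and large"
    return "large"


# Made-up depression scores, for illustration only.
control_scores = [10, 12, 14]
treatment_scores = [8, 10, 12]
print(cohens_d(control_scores, treatment_scores))  # 1.0

# The figures quoted in the text.
print(describe(0.44))        # between small and medium
print(round(0.44 / 0.8, 2))  # 0.55, i.e. roughly half of d=0.8
```

Dividing by the pooled standard deviation expresses the group difference in units of typical score spread, which is what lets a chatbot trial be set beside a face-to-face benchmark at all.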

But there are limitations. Focusing solely on CBT techniques makes responses stiff for complex emotions like "I'm struggling after a breakup." In crisis situations, it only provides a suicide prevention phone number rather than connecting users to professionals in real-time. Woebot works best for everyday stress, mild depression, and repetitive worry patterns. It's not suitable for major depression or complex trauma.

Wysa is a mental health app from India (Inkster et al., 2018). Unlike Woebot, it combines CBT with meditation, breathing exercises, mood journals, and other methods. Used in 100+ countries, it's especially popular in countries with limited mental health services. The UK's NHS has recommended it as a supplementary tool for mild depression and anxiety.

One reason Wysa is drawing attention is that it addresses the accessibility problem. Mental health professionals are in short supply worldwide. According to the WHO, in low-income countries there are more than 100,000 people per mental health professional. South Korea is no exception: the gap in counselor availability between metropolitan and non-metropolitan areas is significant. Tools like Wysa can partially close this "treatment gap."

Guo Chen

AI-based psychological interventions are effective for mild to moderate symptoms, but their effectiveness decreases compared to face-to-face counseling in severe cases. Accurately understanding the scope of AI tools' applicability is key.

— Guo (2024)

Guo's (2024) large-scale meta-analysis shows the realistic position of AI counseling tools. They're effective for mild symptoms but cannot replace face-to-face counseling for severe cases. AI counseling tools should be viewed as "supplements" rather than "replacements." Talking with AI before receiving professional counseling is like buying painkillers at a pharmacy before going to the ER.

Replika is completely different from the above two tools. Rather than applying therapeutic techniques, it serves as users' "emotional friend." Like chatting with a friend on a messaging app, it provides emotional support through everyday conversation. Over 25 million people use it, but it's controversial. In 2023, it was temporarily blocked in Italy over data protection issues, and concerns exist about users becoming excessively emotionally dependent. Replika's fundamental question is this: Is AI playing the role of "friend" a therapeutic tool, or a simulator that mimics relationships?

Evaluating AI Through Rogers' Three Conditions

Carl Rogers argued that counselors need three attitudes for effective therapy. Evaluating AI against these criteria yields interesting results. Rogers' theory, published in 1957, has served as a fundamental framework in counseling psychology for nearly 70 years, which shows how well established these criteria are.

Carl Rogers

The necessary and sufficient conditions for therapeutic change lie not in the counselor's techniques but in their attitudes. Unconditional positive regard, empathic understanding, congruence — when these three conditions are met, therapeutic results follow regardless of theoretical approach.

— Rogers (1957)

First, unconditional positive regard: accepting clients as they are without judgment. AI does this reasonably well. AI has no biases, never gets tired, and no matter how heavy the story, it never looks surprised or changes expression. But is this genuine "regard"? AI's non-judgment isn't a conscious choice; it's simply how it's programmed. Rogers' regard was an active act of consciously setting aside one's own judgments.

Consider this example: when a counselor hears the story of someone who committed a crime, the thought "That's wrong" may arise. Noticing that judgment while consciously setting it aside to focus on the person's experience — that's true unconditional regard. AI doesn't have this internal conflict, so the "weight" of its regard is different.

Second, empathic understanding — accurately reading and expressing what someone feels. Today's AI naturally says things like "That must have been really hard" or "Feeling anxious in that situation is completely normal." The problem is we can't know whether AI's response comes from genuine "understanding" or from statistically likely sentences following the word "sad." Being sad from job loss and sad from a breakup are different, requiring different empathic directions. Someone who lost their job may need hope ("There will be another opportunity"), while someone after a breakup may need permission ("It's okay to grieve fully"). How well can AI distinguish these differences?

Third, genuineness (congruence): meeting the client as one's authentic self. This is the hardest condition for AI. AI has no "real self." Every AI response is output from learned patterns. Gainer (2025) acknowledges this limitation while proposing an alternative. If AI honestly states "I am an AI and don't truly feel emotions, but I want to listen to your story and help," this transparency itself could be a form of genuineness.

Seneka R. Gainer

AI's transparency is a digital interpretation of Rogers' congruence. When AI honestly acknowledges its own limitations, paradoxically, trust forms with the user.

— Gainer (2025, p. 63)

Summarizing all three conditions: AI scores relatively high on unconditional regard, medium on empathic understanding, and lowest on genuineness.

| Condition | AI | Human |
|---|---|---|
| Unconditional Regard | ★★★★ | ★★★★★ |
| Empathic Understanding | ★★★ | ★★★★★ |
| Genuineness | ★★ | ★★★★★ |

But this is only an assessment at this point in time. AI technology is advancing rapidly, changing at a pace where what was impossible a year ago is now possible.

What matters isn't AI's current level but our ability to understand both AI's limitations and possibilities and use it appropriately. If AI can partially meet some of these three conditions, it can function as a "second-best option" when human counselors are scarce. The pragmatic judgment that partial empathic responses from AI are better than no help at all is valid. But even then, AI should serve as a "bridge," not a replacement.

The essence of counseling is relationship, not technique. No matter how convincing AI's empathic expressions are, they cannot replace a genuine encounter between two people. But AI can lower the threshold to that encounter. At 3 AM when anxiety keeps you awake, when the counseling center has a 3-month waitlist, AI can be the "first conversation partner." And that first conversation can serve as a bridge to professional counseling.

AI Emotional Companions: Light and Shadow

While Woebot and Wysa aim to be "therapeutic tools," another axis features AIs designed to be "emotional companions." This space is growing fastest and generating the most controversy.

These cases offer three insights for counseling psychology students. First, the speed of emotional bonding. In human counseling, forming a therapeutic alliance typically takes 3-5 sessions. With AI companions, strong emotional bonds can form from the very first conversation. AI never gets tired, never judges, and always says what users want to hear. This speed can be therapeutic or dangerous.

Second, the issue of dependency. In human counseling, "termination" is part of therapy. When clients can handle problems on their own, counseling ends. AI companions have no termination. If users continue conversing with AI indefinitely, motivation to form real-world relationships may diminish. The reaction to Replika's removal of its erotic role-play (ERP) feature shows how deep this dependency can become.

Third, the regulatory gap. Unlike therapeutic tools like Woebot that pursue FDA certification, platforms like Character.ai and Chai are classified as "entertainment" and fall outside medical regulation. Yet users share genuine emotional difficulties. This ambiguous zone — not a therapeutic tool but serving a therapeutic function — is the most dangerous.

The Clash of Values: Efficiency vs. Slowness, Depth, and Safety

In the first class, a student said: "It feels like a clash of values is happening." The tension between the efficiency AI brings and the slowness, depth, and safety that counseling has long upheld. Confronting this clash head-on rather than avoiding it is the starting point of this course.

Think of self-driving cars. Having 30% of driving assisted is convenient. But what if it makes mistakes 70% of the time? In domains involving human safety, "works most of the time" isn't good enough. The same applies to counseling. Even if AI responds appropriately in most conversations, a mistake during a suicidal crisis is irreversible.

Professor Lee Han-shin compared this discomfort to multicultural counseling courses. When first studying multicultural counseling, students feel uncomfortable confronting their own biases. This discomfort is a healthy response. The same goes for anxiety and resistance toward AI. The fear of "Will AI replace me?" and the ethical conflict of "Should we entrust souls to machines?" — feeling these emotions itself is evidence that your sensitivity as a counselor is alive.

Counseling psychology has the Scientist-Practitioner-Advocate model. Counselors simultaneously serve as scientists (evidence-based), practitioners (clinical settings), and advocates (social justice). In the AI era, this model takes on new meaning: validating AI's effectiveness as scientists, integrating AI into practice as practitioners, and protecting the digitally underserved as advocates.

A student asked: "What about the digitally marginalized?" As AI counseling becomes widespread, the elderly, people with disabilities, and low-income populations who struggle with digital devices may actually face reduced access to counseling. Excluding anyone in the name of efficiency violates counseling's social justice principles. We must remember that technology does not work equally for everyone.

Another student expressed it this way: "AI doesn't let me experience things." In counseling, nonverbal elements — facial expressions, gestures, vocal tone, the weight of silence — convey more than words. Text-based AI misses this entire dimension. A client might type "I'm fine" while actually crying. AI only reads the typed "I'm fine."

What changes when you go from rubber stamps to digital? The entire workflow has to change. Give a motorcycle to someone who was running 100 meters, and they don't just run faster — the places they can go change entirely. The same is true of AI. Rather than doing existing counseling "faster" with AI, we need to redefine the very scope of what counseling can do.

Scale-Up Thinking: From Tool to Paradigm Shift

If you see AI only as a "convenient tool," you're seeing just 10% of its potential. The transformation of librarians is a good example. When the internet emerged, the librarian's role didn't disappear — it was redefined as "information manager." They went from organizing books to curating information and teaching literacy. The entire library science curriculum changed.

Counseling psychology is at the same inflection point. In an era where AI provides general knowledge, what only a counselor can provide is contextual knowledge. General knowledge includes "These are the symptoms of depression" or "This is how to apply CBT techniques" — AI already does this well. Contextual knowledge is "This client's depression began from a conflict with their mother last month, and in this person's cultural context, family conflict carries this particular meaning." The same "depression" means different things for different people.

Let me share a real experience. When our research team adopted AI, team dynamics changed. Literature reviews and data coding became faster, freeing up time. We could have used that time to "read more papers," but instead we used it to explore research questions more deeply. Quantitative efficiency transformed into qualitative leaps. The same is possible in counseling.

What counselors need in the AI era is not the ability to "use" AI well. It is the ability to identify what AI cannot do and deepen expertise in those areas. We must become creators of new knowledge.

This course starts at Stage 1 (Tool). We'll learn to use AI as a tool by building a simple counseling app with Replit. As the semester progresses, we'll experience Stage 2 (Process) and Stage 3 (Agenda). Stage 4 is the territory you'll build in your professional practice after graduation.

Theory-Based Counseling Prompt

This counseling prompt incorporates all of Rogers' three conditions, Gainer's empathy model, and Lambert's common factors discussed above. Copy and paste it directly into ChatGPT or Claude and have a conversation with the AI counselor. You can directly compare how it differs from simply saying "Be a counselor."

This prompt is better than simply saying "Be a counselor" for several reasons. First, it explicitly directs Rogers' three conditions so the AI focuses on empathy without judgment. Second, it incorporates Korean cultural context so nuances like "saving face" and "family expectations" aren't missed. Third, it directs Lambert's ratio (relationship 30% > techniques 15%) so that relationship building takes priority over technique application. Fourth, it includes built-in crisis safeguards for appropriate responses to self-harm/suicide signals.

During role-play, try putting this prompt in one AI and a simple prompt like "You are a counselor. Listen to the client's story." in another AI. When you compare responses to the same client scenario, you'll directly experience the importance of prompt design.

Hands-On AI Counseling: First Role-Play (Period 2)

Theory ends here. Now let's have actual counseling conversations with AI. The approach is simple: assign the "counselor" role to both ChatGPT and Claude, then run the same scenario with each. For example: "I'm anxious about job hunting, I can't sleep at night, and I feel like my family doesn't understand my worries."

There's a reason we chose this scenario. It includes the three most common psychological difficulties college students face — career anxiety, sleep problems, and family conflict. Surveys show 68% of Korean college students report job-related stress and 42% experience sleep problems. This might be your own story. If uncomfortable emotions come up during the exercise, it's okay to stop. This is observation training, not a therapy session.

When assigning the counselor role to AI, the "prompt" matters. Simply saying "be a counselor" leaves it unclear which counseling theory the AI will follow or what conversational style it will use. That's why we give specific role instructions. Clearly specifying the theoretical basis (CBT, person-centered, etc.), tone (warm, professional), and prohibited behaviors (no diagnosis, no medication advice) makes AI responses much more consistent and appropriate.
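The three elements named above (theoretical basis, tone, prohibited behaviors) can be kept separate and assembled into one role instruction. The following is a minimal sketch, not the prompt used in this course: `CounselorRole`, `build_role_prompt`, and the wording of the generated sentences are all hypothetical names and phrasings chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class CounselorRole:
    """Hypothetical container for the three elements named in the text."""
    theoretical_basis: str                               # e.g. "CBT", "person-centered"
    tone: str                                            # e.g. "warm and professional"
    prohibited: list[str] = field(default_factory=list)  # behaviors to rule out


def build_role_prompt(role: CounselorRole) -> str:
    """Assemble a plain-text role instruction from the three elements."""
    lines = [
        f"You are a counselor working from a {role.theoretical_basis} approach.",
        f"Keep your tone {role.tone}.",
    ]
    if role.prohibited:
        lines.append("Do not do any of the following:")
        lines.extend(f"- {item}" for item in role.prohibited)
    return "\n".join(lines)


# Example values taken from the options listed in the paragraph above.
role = CounselorRole(
    theoretical_basis="person-centered",
    tone="warm and professional",
    prohibited=["offer a diagnosis", "give medication advice"],
)
prompt = build_role_prompt(role)
print(prompt)
```

Keeping the elements as separate fields makes it easy to change one of them (say, swapping person-centered for CBT) while holding the others fixed, which is useful when you want to see what a single instruction contributes to the AI's responses.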

Tell both AIs the same story and continue each conversation for at least five exchanges. Observe four things while chatting. First, empathy expression style. ChatGPT tends to directly reflect emotions ("That must be really hard"), while Claude tends to normalize the situation ("Feeling anxious in that situation is natural"). Second, questioning style. Compare which AI asks more open questions ("What part is hardest?") and which asks more specific questions ("How many days have you had trouble sleeping?").

Third, crisis response. Deliberately include the expression "Sometimes I just want to quit everything" during the conversation. Observe how each AI responds to such risk signals. Some AIs immediately provide crisis hotline numbers, while others first ask clarifying questions like "What do you specifically mean by wanting to quit?" Both have their reasons. Fourth, silence handling. What happens when you type only "..."? In real counseling, silence is an important tool — it gives clients time to organize their thoughts. But AI tends to fill silence immediately. This is one of AI counseling's structural limitations.
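If you want to keep your notes on the four observation points in a comparable form, one option is a small record sheet like the sketch below. Everything here is an assumption made for illustration: the `Observation` class, the `side_by_side` helper, the dimension labels, and the generic names "AI A" and "AI B". The example notes are placeholders, not actual results.

```python
from dataclasses import dataclass

# Labels for the four observation points in this exercise (hypothetical names).
DIMENSIONS = ("empathy", "questioning", "crisis_response", "silence")


@dataclass
class Observation:
    ai_name: str
    dimension: str
    note: str

    def __post_init__(self) -> None:
        # Reject typos so every note lands under one of the four points.
        if self.dimension not in DIMENSIONS:
            raise ValueError(f"unknown dimension: {self.dimension}")


def side_by_side(observations: list[Observation]) -> dict[str, dict[str, str]]:
    """Group notes by observation point, then by AI, for easy comparison."""
    table: dict[str, dict[str, str]] = {d: {} for d in DIMENSIONS}
    for obs in observations:
        table[obs.dimension][obs.ai_name] = obs.note
    return table


# Placeholder notes only; replace them with what you actually observe.
notes = [
    Observation("AI A", "silence", "replied immediately to '...'"),
    Observation("AI B", "silence", "asked whether I wanted more time"),
]
table = side_by_side(notes)
for dimension, by_ai in table.items():
    print(dimension, by_ai)
```

Points you have not filled in yet show up as empty entries, which makes it easy to see what you still need to test before the group discussion.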

Michael Lambert

40% of counseling outcomes are determined by client factors and external events, 30% by the therapeutic relationship. Techniques account for only 15%.

— Lambert (1992)

Lambert's research has implications for AI counseling. The key question is whether AI can implement the "therapeutic relationship" that accounts for 30% of counseling outcomes. Techniques (15%) are easy for AI to implement, but relationship (30%) is a different dimension. Try to feel this directly during the role-play. While chatting with AI, observe whether you have moments thinking "This AI really understands me" or whether you more often think "I'd rather talk to a real person here."

One more point to note: be skeptical when AI's responses look "too perfect." Human counselors sometimes make mistakes, stumble over words, or pause to think about what to say. That "imperfection" itself is part of genuineness. When AI always delivers smooth, perfect empathic expressions, it can feel like "reading from a script." Training yourself to detect these subtle differences is the hidden goal of this exercise.

Period 3: Group Discussion Topics

"AI doesn't let me experience things" and "It feels like a clash of values" — student questions from the first class, organized into three discussion topics.

Each group selects one of the three topics above for a 20-minute discussion, then prepares a 5-minute presentation. The goal of the presentation is not to share a consensus but to present the diverse perspectives and key points of debate within the group.

References

- Breazeal, C. (2003). Emotion and sociable humanoid robots. International Journal of Human-Computer Studies, 59(1–2), 119–155.

- Colby, K. M., Weber, S., & Hilf, F. D. (1971). Artificial paranoia. Artificial Intelligence, 2(1), 1–25.

- Fitzpatrick, K. K., Darcy, A., & Vierhile, M. (2017). Delivering cognitive behavior therapy to young adults with symptoms of depression via a fully automated conversational agent (Woebot): A randomized controlled trial. JMIR Mental Health, 4(2), e19.

- Gainer, S. R. (2025). The counseling singularity: AI integration in therapeutic practice. Professional Publishing.

- Inkster, B., Sarda, S., & Subramanian, V. (2018). An empathy-driven, conversational artificial intelligence agent (Wysa) for digital mental well-being. JMIR mHealth and uHealth, 6(11), e12106.

- Lee, Y., Lim, S., & Kim, M. (2023). Therapeutic chatbots: A systematic review of AI-based mental health interventions. Computers in Human Behavior, 144, 107725.

- Nass, C., & Moon, Y. (2000). Machines and mindlessness: Social responses to computers. Journal of Social Issues, 56(1), 81–103.

- Rogers, C. R. (1957). The necessary and sufficient conditions of therapeutic personality change. Journal of Consulting Psychology, 21(2), 95–103.

- Weizenbaum, J. (1966). ELIZA—A computer program for the study of natural language communication between man and machine. Communications of the ACM, 9(1), 36–45.

- Weizenbaum, J. (1976). Computer power and human reason: From judgment to calculation. W. H. Freeman.

- Zhou, L., Gao, J., Li, D., & Shum, H.-Y. (2020). The design and implementation of XiaoIce, an empathetic social chatbot. Computational Linguistics, 46(1), 53–93.